The Hi-Audio ERC project has published a new peer-reviewed article presenting the Hi-Audio online platform (https://hiaudio.fr/) — an open-source web-based platform designed to support collaborative multitrack music recording, annotation, and sharing for scientific research.

The article appears in the EURASIP Journal on Audio, Speech, and Music Processing and is available open access via Springer.

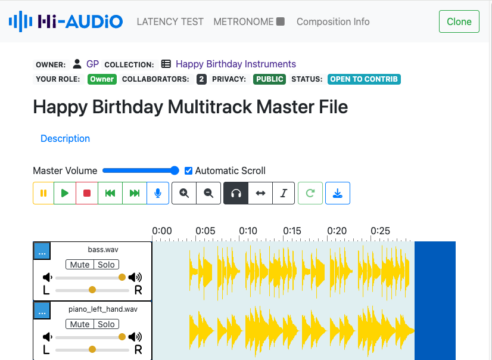

The Hi-Audio multitrack editor interface, showing the composition view with recording controls, waveform display, and collaboration status indicators.

Hi-Audio offers a complete browser-based workflow for collaborative multitrack music recording and dataset building, and a detailed how-to guide is available for new users.

One of the persistent challenges in Music Information Retrieval (MIR) research is the scarcity of suitable open datasets — particularly annotated, multitrack recordings spanning a broad range of instruments and musical traditions. While the availability of data is essential for training and evaluating AI models for tasks such as source separation, instrument recognition, or music generation, existing collections tend to be limited in scope, predominantly focused on Western genres, and often subject to copyright restrictions that hinder open sharing.

The Hi-Audio project, launched in October 2022 and supported by the European Research Council (ERC, grant 101052978), was conceived to directly address this gap. The platform was officially presented as a Late-Breaking Demo at ISMIR 2023 in Milan, marking its first public introduction to the international MIR community.

The number of online Digital Audio Workstations (DAWs) has grown significantly in recent years, spanning both commercial and open-source solutions. Hi-Audio distinguishes itself by focusing specifically on the structured collection of annotated multitrack data for scientific research.

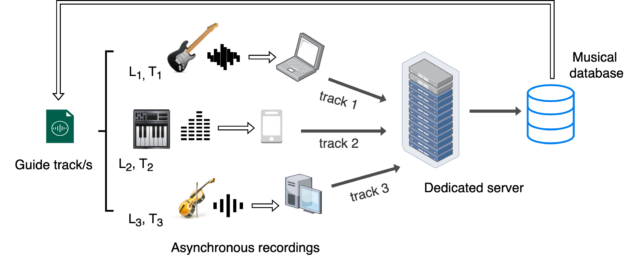

Unlike streaming-oriented platforms or real-time performance tools, Hi-Audio is designed for asynchronous, distributed recording. Musicians from different locations can contribute individual instrument tracks to a shared composition over time, using nothing more than a web browser and a microphone. All source code is publicly available across the project’s GitHub repositories, and music data published through the platform is released under Creative Commons licensing.

Overview of the Hi-Audio recording scheme. Musicians at different locations (L1, L2, L3) and time points (T1, T2, T3) record individual tracks asynchronously, using a shared guide track as reference. All data is stored and annotated on a dedicated server and fed into an open musical database.
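To make the asynchronous model more concrete, here is a minimal Python sketch of how a composition, its guide track, and individually recorded contributions might be represented. The class and field names (`Composition`, `Track`, `mixdown`) are hypothetical and are not taken from the Hi-Audio code base; the sketch also assumes that every track has already been latency-compensated, so that its first sample lines up with the first sample of the guide track.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from datetime import datetime


@dataclass
class Track:
    """One musician's contribution (hypothetical model, not the Hi-Audio schema)."""
    musician: str
    location: str          # e.g. "L1" in the figure
    recorded_at: datetime  # e.g. "T1" in the figure
    instrument: str
    samples: list[float]   # assumed aligned: sample 0 == guide sample 0


@dataclass
class Composition:
    """A shared composition: a guide track plus asynchronously added tracks."""
    title: str
    guide: list[float]
    tracks: list[Track] = field(default_factory=list)

    def add_track(self, track: Track) -> None:
        # Contributions can arrive at any time and from any place.
        self.tracks.append(track)

    def mixdown(self) -> list[float]:
        """Sum contributed tracks sample by sample on the guide's timeline.

        The guide is only a reference and is not included in the mix.
        """
        length = max([len(self.guide)] + [len(t.samples) for t in self.tracks])
        mix = [0.0] * length
        for track in self.tracks:
            for i, x in enumerate(track.samples):
                mix[i] += x
        return mix


# Usage: two musicians record against the same guide at different places/times.
song = Composition("demo", guide=[0.0] * 4)
song.add_track(Track("A", "L1", datetime(2024, 1, 1), "voice", [0.1, 0.2, 0.3, 0.4]))
song.add_track(Track("B", "L2", datetime(2024, 2, 1), "guitar", [0.5, 0.5]))
print(len(song.tracks), song.mixdown())
```

Because every contribution shares the guide track's timeline, tracks recorded in different places and at different times can be combined by plain sample-wise addition once their recording latency has been removed.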

For a broader overview of the platform’s positioning and goals, see also our earlier post: Bridging Music and Research.

Collections such as Happy Birthday (recorded in different languages and with various instruments) and Au clair de la lune illustrate this collaborative model in action: both were built by cloning a base composition template and inviting contributions from multiple musicians.

🎂 Happy Birthday to You entered the public domain in 2016 following a landmark court ruling — making it one of the most recognisable songs freely available for open sharing and research.

🌙 Au clair de la lune holds a unique place in audio history: a recording made on a phonautograph in 1860 was rediscovered in 2008, making it the oldest surviving recorded melody.

The platform introduces several key technical features that support both its architecture and real-world use.

Automatic metadata annotation. When audio files are uploaded, the platform automatically processes them server-side using a combination of signal processing and machine learning tools. Extracted descriptors include musical features such as tempo (BPM), tonality (key and scale), instrument label, and percussion detection, alongside low-level audio file metadata including sample rate, bit rate, channel layout, codec, and duration.
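The article does not specify which tools perform this extraction, so the following is only an illustration of the low-level part of such a pipeline: a Python sketch that reads sample rate, channel count, bit depth, bit rate, and duration from an uncompressed WAV file using the standard-library `wave` module. The function name `wav_metadata` and the returned keys are hypothetical. Musical descriptors such as tempo, key, or instrument labels would require dedicated signal-processing or machine-learning models and are not shown.

```python
import io
import wave


def wav_metadata(source):
    """Return low-level descriptors of an uncompressed PCM WAV file.

    `source` may be a filename or a binary file-like object.
    (Hypothetical helper; not the Hi-Audio implementation.)
    """
    with wave.open(source, "rb") as wav:
        sample_rate = wav.getframerate()
        channels = wav.getnchannels()
        bit_depth = 8 * wav.getsampwidth()  # getsampwidth() returns bytes per sample
        n_frames = wav.getnframes()
    return {
        "codec": "PCM",  # the stdlib wave module only handles uncompressed PCM
        "sample_rate_hz": sample_rate,
        "channels": channels,
        "bit_depth": bit_depth,
        # For PCM, the bit rate follows directly from the fields above.
        "bit_rate_bps": sample_rate * channels * bit_depth,
        "duration_s": n_frames / sample_rate,
    }


# Usage: build a one-second, mono, 16-bit, 44.1 kHz silent WAV in memory.
buffer = io.BytesIO()
with wave.open(buffer, "wb") as out:
    out.setnchannels(1)
    out.setsampwidth(2)
    out.setframerate(44_100)
    out.writeframes(b"\x00\x00" * 44_100)
buffer.seek(0)
print(wav_metadata(buffer))
```

Compressed uploads (e.g. MP3 or Ogg) would need a decoder or a probing tool instead of `wave`, which is also where the codec field becomes informative.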

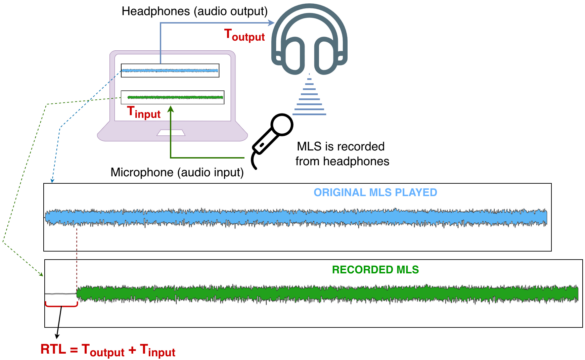

Browser-based round-trip latency estimation. A technically novel contribution of the platform is its built-in method for estimating and compensating recording latency directly within the browser. The approach, based on Maximum Length Sequences (MLS) — a technique adapted from room acoustics — enables accurate temporal alignment of recorded tracks across different devices, browsers, and operating systems. This method was presented at the Web Audio Conference 2025 (WAC 2025) and received the Best Poster Award.

Diagram illustrating the MLS-based round-trip latency estimation procedure. The MLS signal is played through headphones and simultaneously captured by the microphone; the measured delay (RTL = T_output + T_input) is used to compensate for timing offsets during recording.
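The principle can be simulated offline in a few lines of Python. This sketch is not the Hi-Audio browser implementation: it generates an MLS with a linear-feedback shift register, models one period of a periodic capture that arrives delayed and noisy, and recovers the round-trip latency as the lag of the peak in the circular cross-correlation. The sequence lengths, LFSR taps, the 48 kHz sample rate, and helper names such as `simulate_recording` and `estimate_delay` are assumptions chosen for the example. A real measurement needs a sequence longer than the largest expected latency, so practical code would likely use longer sequences and FFT-based correlation.

```python
import random

# Feedback taps (1-indexed register positions) for maximal-length LFSRs,
# taken from standard tables. Only these two sizes are supported in this sketch.
MLS_TAPS = {7: (7, 6), 10: (10, 7)}


def mls(nbits=10):
    """Return one period (2**nbits - 1 samples) of an MLS as +1.0/-1.0 values."""
    taps = MLS_TAPS[nbits]
    state = [1] * nbits  # any non-zero initial state yields the full period
    sequence = []
    for _ in range(2 ** nbits - 1):
        sequence.append(1.0 if state[-1] else -1.0)
        feedback = 0
        for tap in taps:
            feedback ^= state[tap - 1]
        state = [feedback] + state[:-1]  # shift right, insert the feedback bit
    return sequence


def simulate_recording(excitation, delay, gain=0.5, noise=0.2, seed=0):
    """Hypothetical stand-in for the microphone capture: one steady-state period
    of the looped MLS, delayed by `delay` samples, attenuated, plus noise."""
    rng = random.Random(seed)
    n = len(excitation)
    return [gain * excitation[(i - delay) % n] + rng.gauss(0.0, noise)
            for i in range(n)]


def estimate_delay(excitation, recording):
    """Return the lag (in samples) that maximises the circular cross-correlation.
    Only valid when the true delay is shorter than one MLS period."""
    n = len(excitation)
    best_lag, best_value = 0, float("-inf")
    for lag in range(n):
        value = sum(excitation[i] * recording[(i + lag) % n] for i in range(n))
        if value > best_value:
            best_lag, best_value = lag, value
    return best_lag


def compensate(track, rtl_samples):
    """Drop the first `rtl_samples` samples so the take lines up with the guide."""
    return track[rtl_samples:]


SAMPLE_RATE = 48_000  # assumed sample rate
probe = mls(10)
captured = simulate_recording(probe, delay=300)  # simulated RTL of 300 samples
rtl = estimate_delay(probe, captured)
print(f"RTL = {rtl} samples = {1000 * rtl / SAMPLE_RATE:.2f} ms")
```

The sharp correlation peak is what makes an MLS attractive for this task: a ±1 MLS has a circular autocorrelation equal to its length at lag zero and -1 at every other lag, so the delay stands out clearly even when the capture is noisy. The estimated RTL lumps output and input latency together, which is exactly the quantity needed to shift a recorded take back into alignment.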

Collaboration, roles, and privacy. The platform supports fine-grained access control through hierarchical user roles — owner, admin, member, and guest — and three composition visibility levels: public, registered users only, and private. This makes the system flexible enough to support both open crowdsourcing and controlled research data collection.
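The role hierarchy and visibility levels can be sketched as a simple permission check. The article names the four roles and the three visibility levels but not the exact rights attached to each, so the mapping below (for example, that guests may only view, members may record, and only admins and owners manage members) is a hypothetical illustration rather than the platform's actual policy; the function names are likewise invented for the example.

```python
from __future__ import annotations

from enum import Enum, IntEnum


class Role(IntEnum):
    """Hierarchical roles on a composition: a higher value includes lower rights."""
    GUEST = 1
    MEMBER = 2
    ADMIN = 3
    OWNER = 4


class Visibility(Enum):
    PUBLIC = "public"
    REGISTERED = "registered"  # registered users only
    PRIVATE = "private"


def can_view(visibility: Visibility, is_registered: bool, role: Role | None) -> bool:
    """Hypothetical rule: who may see a composition."""
    if visibility is Visibility.PUBLIC:
        return True
    if visibility is Visibility.REGISTERED:
        return is_registered
    return role is not None  # PRIVATE: only users holding a role on it


def can_contribute(role: Role | None) -> bool:
    """Hypothetical rule: members and above may add recordings."""
    return role is not None and role >= Role.MEMBER


def can_manage_members(role: Role | None) -> bool:
    """Hypothetical rule: admins and owners may invite or remove participants."""
    return role is not None and role >= Role.ADMIN


# Usage: a registered guest looking at a private composition they belong to.
print(can_view(Visibility.PRIVATE, True, Role.GUEST), can_contribute(Role.GUEST))
```

Using an ordered enum keeps the hierarchy explicit: each check is a single comparison, so open crowdsourcing (public compositions, many members) and controlled collection (private compositions, few admins) differ only in configuration.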

A preliminary usability study was conducted in collaboration with La Scène, the student music association at Télécom Paris, involving 22 amateur musicians. Participants completed a set of tasks covering the platform’s core functionalities under realistic, self-directed conditions. Core recording tasks achieved high completion rates, while areas such as metadata annotation and collaboration management were identified as requiring interface improvements. The full results are available here.

Building on this preliminary study, a practice-based evaluation is currently underway with the FLO Lab (Female Laptop Orchestra), a collective of musicians, composers, and researchers from multiple institutions who specialise in laptop-based and electroacoustic performance. As technically literate, geographically dispersed practitioners, they represent an ideal user group for evaluating the platform under real-world collaborative conditions. Preliminary results are publicly accessible.

Together, these evaluation efforts not only inform the platform’s ongoing development but also underscore its potential as a research tool across diverse musical contexts and communities.

Beyond its technical contributions, Hi-Audio helps lower the barriers to collaborative music creation and data sharing. By making these tools available directly in the browser, it enables musicians, researchers, and educators to participate more easily in building open and diverse music datasets.

Looking ahead, the team outlines several directions for future development, including a search engine for dataset content retrieval, content moderation tools for open collaborative compositions, and richer temporally-aligned annotations covering rhythmic patterns, harmonic structures, and other salient musical features. These enhancements are expected to further consolidate the platform’s utility for research in source separation, generative audio modelling, automatic mixing, and related MIR tasks.

The Hi-Audio platform is freely accessible — no installation required, just a web browser and a microphone. Whether you are a musician looking to contribute recordings, a researcher exploring the dataset, or simply curious, try it now or get in touch with the team. À bientôt! 🎵

This work was funded by the European Union (ERC, Hi-Audio, 101052978). Views expressed are those of the authors only and do not necessarily reflect those of the European Union or the European Research Council.